[Avg. reading time: 12 minutes]

Concurrency & Parallelism

Process

Definition

A process is an independent program in execution.

- Has its own memory space

- Is isolated from other processes

- Managed by the operating system

Examples

- Chrome browser

- VS Code

- Python script running

Each of these runs as its own process (some applications, such as Chrome, use several). Processes do NOT share memory by default.
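The isolation between processes can be seen in a minimal sketch that starts a child process and reads what it prints. It assumes a Unix-like system where `echo` is on the PATH; the function name `echo_in_child` is made up for this illustration.

```rust
use std::process::Command;

/// Runs `echo <message>` as a child process and returns what it printed.
/// Assumption: a Unix-like system with `echo` available on the PATH.
fn echo_in_child(message: &str) -> String {
    let output = Command::new("echo")
        .arg(message)
        .output()
        .expect("failed to start child process");
    String::from_utf8_lossy(&output.stdout).trim_end().to_string()
}

fn main() {
    println!("parent pid: {}", std::process::id());
    // The child has its own memory; here its text reaches the parent
    // through the pipe the OS attached to the child's standard output.
    println!("child said: {}", echo_in_child("hello from another process"));
}
```

The parent never touches the child's variables. Data crosses the process boundary only through an OS channel, in this case a pipe.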

Thread

Definition

A thread is a unit of execution inside a process.

- Multiple threads can exist inside one process

- Threads share the same memory

- Managed by the OS

Key Idea

Threads are like workers inside a process.

- Cheaper to create and switch between than processes

- Can run in parallel on multiple cores

- But shared memory = risk (data races)
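As a rough sketch of threads sharing memory safely, the example below lets several threads increment one counter. The function name `count_with_threads` is hypothetical, and `Arc<Mutex<_>>` is only one of several ways to protect shared state.

```rust
use std::sync::{Arc, Mutex};
use std::thread;

/// Spawns `n_threads` threads that each add 1 to a shared counter
/// `increments_per_thread` times, then returns the final value.
fn count_with_threads(n_threads: usize, increments_per_thread: u64) -> u64 {
    // Arc gives shared ownership; Mutex allows one writer at a time.
    let counter = Arc::new(Mutex::new(0_u64));

    let handles: Vec<_> = (0..n_threads)
        .map(|_| {
            let counter = Arc::clone(&counter);
            thread::spawn(move || {
                for _ in 0..increments_per_thread {
                    *counter.lock().expect("mutex poisoned") += 1;
                }
            })
        })
        .collect();

    for handle in handles {
        handle.join().expect("thread panicked");
    }

    let total = *counter.lock().expect("mutex poisoned");
    total
}

fn main() {
    println!("{}", count_with_threads(4, 1000)); // prints 4000
}
```

Without the lock, the increments from different threads could interleave and lose updates. Rust refuses to compile an unsynchronized version of this in safe code.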

Example

A single application can have:

- UI thread

- Network thread

- Worker thread

Threads are not CPU cores, but they run on them: the OS schedules threads onto the available cores.

Task

Definition

A task is a piece of work to be executed.

- It is NOT tied to threads or processes

- It is an abstraction

Key Idea

A task is WHAT to do

A thread is WHERE it runs

Examples

- “Read this file”

- “Process these 1M rows”

- “Call this API”
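One way to picture the split between WHAT and WHERE is to model a task as a closure and hand it to a worker thread. This is a simplified sketch; the `Task` alias and `run_on_worker` are names invented for the example, not a standard API.

```rust
use std::sync::mpsc;
use std::thread;

// A task is a boxed closure: a description of WHAT to do.
type Task = Box<dyn FnOnce() -> u64 + Send + 'static>;

/// Runs every task on one worker thread and returns the results
/// in the order the tasks were submitted.
fn run_on_worker(tasks: Vec<Task>) -> Vec<u64> {
    let (task_tx, task_rx) = mpsc::channel::<Task>();
    let (result_tx, result_rx) = mpsc::channel::<u64>();

    // The worker thread is WHERE the tasks run.
    let worker = thread::spawn(move || {
        for task in task_rx {
            result_tx.send(task()).expect("result receiver dropped");
        }
    });

    for task in tasks {
        task_tx.send(task).expect("worker stopped");
    }
    drop(task_tx); // closing the channel lets the worker loop end

    worker.join().expect("worker panicked");
    result_rx.iter().collect()
}

fn main() {
    let tasks: Vec<Task> = vec![
        Box::new(|| (1..=10_u64).sum::<u64>()),
        Box::new(|| 6_u64 * 7),
    ];
    println!("{:?}", run_on_worker(tasks)); // prints [55, 42]
}
```

The same tasks could be handed to a different thread, or to a pool of threads, without changing the tasks themselves.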

Differences

Process
└── Thread
    └── Executes Tasks

- Process : container

- Thread : worker

- Task : actual work

| Concept | What it is | Memory | Cost |
|---|---|---|---|
| Process | Independent program | Separate | High |
| Thread | Worker inside process | Shared | Medium |
| Task | Unit of work | N/A | Lightweight |

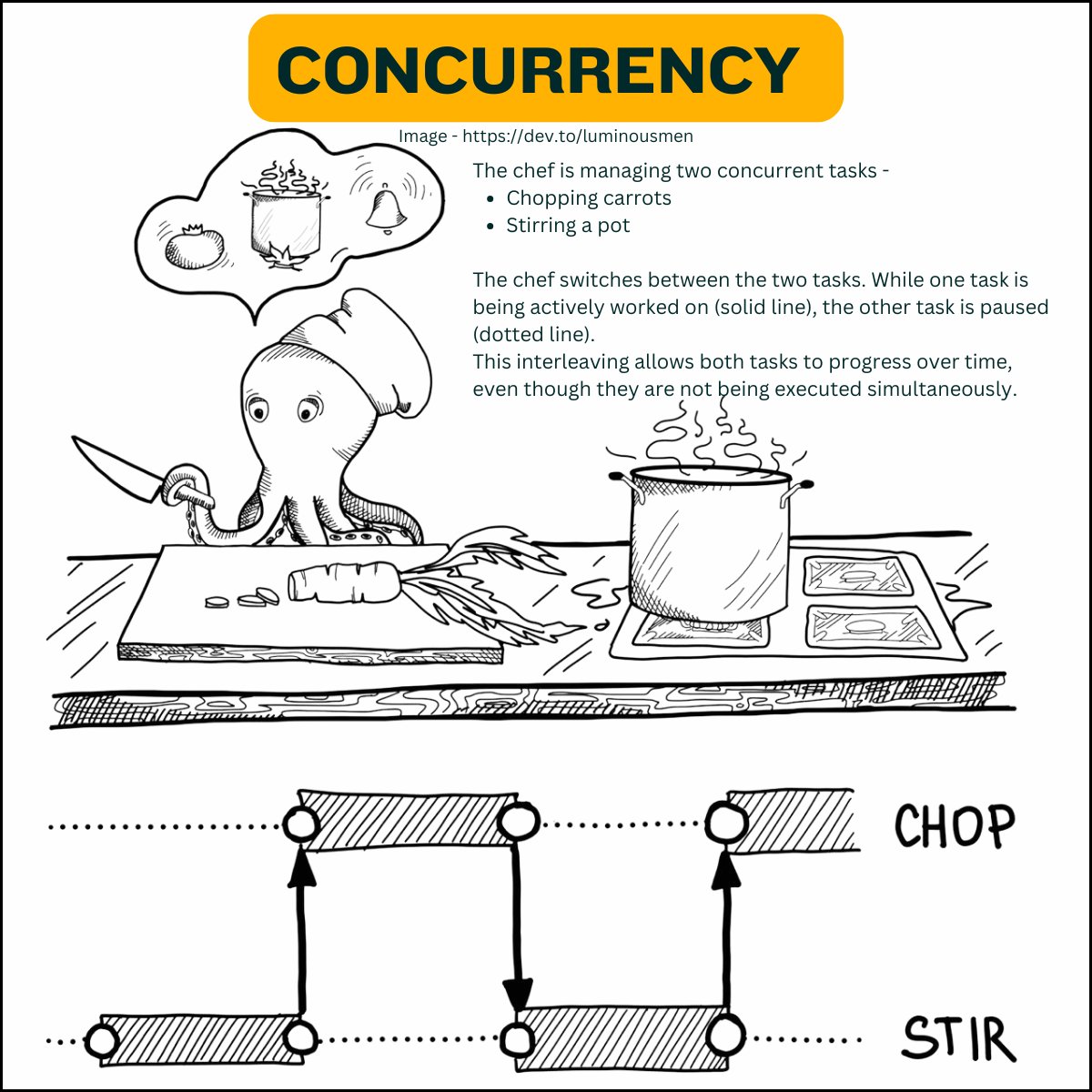

Concurrency and parallelism both deal with handling multiple tasks, but they approach the problem differently.

Concurrency

Definition

- Multiple tasks are in progress at the same time

- Not necessarily running simultaneously

- Focus: coordination and efficient waiting

- Works even on one CPU core

- Achieved by task switching

- Goal: avoid idle time

Data Engineering Example

- Reading from Kafka

- Calling APIs

- Writing to databases

This is IO-bound work.
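The benefit of overlapping waits can be sketched with the standard library alone. Here `thread::sleep` stands in for a real IO call, and the names `fake_io_call` and `run_concurrently` are hypothetical. An async runtime gets the same overlap with fewer threads.

```rust
use std::thread;
use std::time::{Duration, Instant};

/// Stand-in for an IO call: the thread waits, it does not compute.
fn fake_io_call(name: &str, wait: Duration) -> String {
    thread::sleep(wait);
    format!("{name} done")
}

/// Starts every call before waiting on any of them, so the waits overlap.
fn run_concurrently(calls: Vec<(&'static str, u64)>) -> Vec<String> {
    let handles: Vec<_> = calls
        .into_iter()
        .map(|(name, millis)| {
            thread::spawn(move || fake_io_call(name, Duration::from_millis(millis)))
        })
        .collect();

    handles
        .into_iter()
        .map(|handle| handle.join().expect("thread panicked"))
        .collect()
}

fn main() {
    let started = Instant::now();
    let results = run_concurrently(vec![("kafka", 100), ("api", 100), ("db", 100)]);
    // Elapsed time should be close to the longest wait, not the sum of all waits.
    println!("{results:?} in {:?}", started.elapsed());
}
```

Run one after another, the three calls would take about 300 ms. Overlapped, the total is close to the longest single wait.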

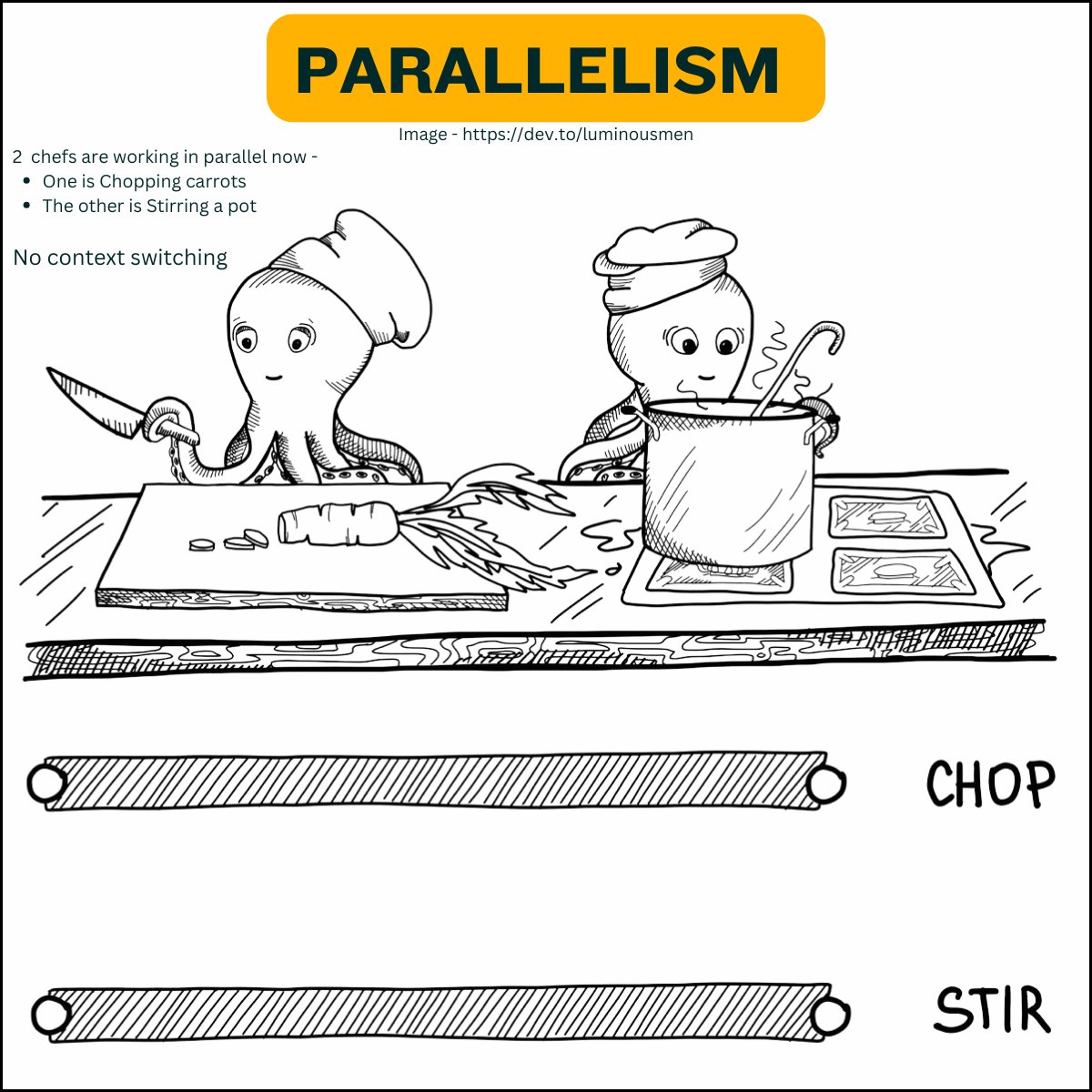

Parallelism

Definition

- Multiple tasks execute at the same instant

- Uses multiple CPU cores

- Focus: speed through computation

- Many CPU cores working at once

- Often the same operation applied across partitions of the data

Data Engineering Example

- Aggregations

- Filtering large datasets

- Feature engineering

This is CPU-bound work.
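A minimal sketch of CPU-bound parallelism using only the standard library: the data is split into chunks and each chunk is processed on its own thread. The function name `parallel_sum_of_squares` and the chunking strategy are illustrative choices, not a tuned implementation.

```rust
use std::thread;

/// Splits `data` into roughly `n_workers` chunks, sums the squares of each
/// chunk on its own thread, and adds up the partial results.
fn parallel_sum_of_squares(data: &[u64], n_workers: usize) -> u64 {
    let n_workers = n_workers.max(1);
    // Round up so every element lands in some chunk; never use size 0.
    let chunk_size = ((data.len() + n_workers - 1) / n_workers).max(1);

    // Scoped threads may borrow `data` because they finish before `scope` returns.
    thread::scope(|scope| {
        let handles: Vec<_> = data
            .chunks(chunk_size)
            .map(|chunk| scope.spawn(move || chunk.iter().map(|x| x * x).sum::<u64>()))
            .collect();

        handles
            .into_iter()
            .map(|handle| handle.join().expect("worker panicked"))
            .sum::<u64>()
    })
}

fn main() {
    let data: Vec<u64> = (1..=8).collect();
    println!("{}", parallel_sum_of_squares(&data, 4)); // prints 204
}
```

Each thread applies the same operation to a different slice of the data. That is the pattern behind parallel aggregations and filters.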

Key Difference

- Concurrency solves waiting problems

- Parallelism solves compute problems

Rust Implementation

Concurrency in Rust

Rust achieves concurrency through threads and async/await.

Threads: Rust’s standard library provides native support for threads, which are the basic unit of concurrency.

- True parallel execution (multi-core)

- Low-level control

```rust
use std::thread;

fn main() {
    let handle = thread::spawn(|| {
        // This is the code that will run in a new thread.
        for i in 1..10 {
            println!("Hello from the spawned thread! {}", i);
        }
    });

    // Meanwhile, the main thread continues.
    for i in 1..5 {
        println!("Hello from the main thread! {}", i);
    }

    // Wait for the spawned thread to finish.
    handle.join().unwrap();
}
```
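A spawned thread can also take ownership of data and hand a result back through its join handle. The sketch below assumes a hypothetical helper named `sum_in_background`.

```rust
use std::thread;

/// Sums `values` on a separate thread and returns the result.
fn sum_in_background(values: Vec<u64>) -> u64 {
    // `move` transfers ownership of `values` into the new thread.
    let handle = thread::spawn(move || values.iter().sum::<u64>());

    // `join` blocks until the thread finishes and yields its return value.
    handle.join().expect("spawned thread panicked")
}

fn main() {
    println!("{}", sum_in_background(vec![1, 2, 3, 4])); // prints 10
}
```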

Async/Await: Rust’s async/await syntax allows you to write asynchronous code that looks like synchronous code.

- Efficient for IO-bound tasks

- Non-blocking execution

```rust
// Requires the Tokio async runtime as a dependency.
#[tokio::main]
async fn main() {
    let future1 = async_task();
    let future2 = async_task();

    // `join!` polls both futures concurrently and waits for both to finish.
    tokio::join!(future1, future2);
}

async fn async_task() {
    // Some asynchronous work
    println!("Doing async work");
}
```

Parallelism in Rust

Threads already give Rust parallelism on multi-core machines. For data parallelism, a common choice is the Rayon library, which provides easy-to-use abstractions such as parallel iterators.

Rayon: Rayon makes it easy to parallelize data processing tasks.

- Automatic parallel execution

- Ideal for data processing

```rust
use rayon::prelude::*;

fn main() {
    let data = vec![1, 2, 3, 4, 5, 6, 7, 8];

    // `par_iter` splits the work across Rayon's thread pool.
    let result: Vec<i32> = data
        .par_iter()
        .map(|x| x * 2)
        .collect();

    println!("{:?}", result);
}
```

Key Points

Safety: Rust's ownership and borrowing rules, together with the `Send` and `Sync` traits, prevent data races at compile time in safe code.

Ease of Use: Libraries like Rayon and Tokio make it easier to work with concurrency and parallelism without delving too deep into low-level details.
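The safety point can be illustrated with standard-library channels, where ownership of each message moves from the sending thread to the receiver. This is a small sketch; `collect_from_producers` and the message text are made up for the example.

```rust
use std::sync::mpsc;
use std::thread;

/// Starts `n_producers` threads that each send one message,
/// then returns all messages in sorted order.
fn collect_from_producers(n_producers: u32) -> Vec<String> {
    let (tx, rx) = mpsc::channel();

    for id in 0..n_producers {
        let tx = tx.clone();
        thread::spawn(move || {
            let message = format!("row batch from producer {id}");
            tx.send(message).expect("receiver dropped");
            // `message` was moved into the channel; using it here would not compile.
        });
    }
    drop(tx); // drop the original sender so `rx` ends once all producers finish

    let mut received: Vec<String> = rx.iter().collect();
    received.sort(); // arrival order is not deterministic
    received
}

fn main() {
    for line in collect_from_producers(3) {
        println!("{line}");
    }
}
```

Because a sent value can no longer be used by the sender, two threads never hold the same mutable data at once. The compiler enforces that rule, not a runtime check.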

Demo

git clone https://github.com/gchandra10/rust-concurrency-parallelism.git